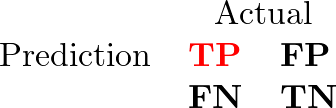

Consider a system that diagnoses a disease. The evaluation terms below can all be expressed in terms of the four counts in its confusion matrix, which compares each person's actual status with the predicted status.

The four cells are:

- TP: true positives, people who have the disease and are predicted to have it.

- FP: false positives, healthy people who are predicted to have the disease.

- FN: false negatives, people who have the disease but are predicted to be healthy.

- TN: true negatives, healthy people who are predicted to be healthy.
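To make the four cells concrete, here is a minimal Python sketch that tallies them from two label lists. It assumes binary labels where 1 means "has the disease" and 0 means "healthy"; the function name `confusion_counts` and the six example labels are hypothetical, chosen only for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn), assuming labels 1 = has the disease, 0 = healthy."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if actual == 1 and predicted == 1:
            tp += 1  # sick, predicted sick
        elif actual == 0 and predicted == 1:
            fp += 1  # healthy, predicted sick
        elif actual == 1 and predicted == 0:
            fn += 1  # sick, predicted healthy
        elif actual == 0 and predicted == 0:
            tn += 1  # healthy, predicted healthy
        else:
            raise ValueError("labels must be 0 or 1")
    return tp, fp, fn, tn


# Hypothetical labels for six people.
y_true = [1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0]
print(confusion_counts(y_true, y_pred))  # (2, 1, 1, 2)
```

Every person lands in exactly one cell, so the four counts always sum to the number of people.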


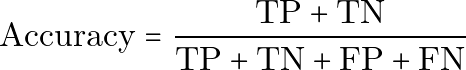

Accuracy

Accuracy is the proportion of all people whose status (diseased or healthy) is predicted correctly.

In plain text:

Accuracy = (TP + TN) / (TP + TN + FP + FN)

Accuracy is easy to understand, but it can be misleading when the classes are imbalanced. For example, if only 1% of people have the disease, a model that predicts everyone as healthy still reaches 99% accuracy while finding no true cases.
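The imbalance example can be checked numerically. The sketch below assumes a hypothetical population of 1,000 people, 10 of whom (1%) are sick, and a model that labels everyone healthy; the function name `accuracy` is illustrative.

```python
def accuracy(tp, fp, fn, tn):
    """Fraction of all people whose status is predicted correctly."""
    total = tp + fp + fn + tn
    if total == 0:
        raise ValueError("confusion matrix is empty")
    return (tp + tn) / total


# Hypothetical population: 1,000 people, 10 sick (1%).
# Predicting "healthy" for everyone gives TP = 0 and FP = 0:
# all 10 sick people are false negatives, all 990 healthy people are true negatives.
print(accuracy(tp=0, fp=0, fn=10, tn=990))  # 0.99
```

The score is 0.99 even though the model never detects a single sick person.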

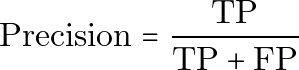

Precision

Precision is the proportion of people who really have the disease among all people predicted to have it.

In plain text:

Precision = TP / (TP + FP)

High precision means that when the system predicts disease, that prediction is usually correct. Precision is especially important when false positives are costly.
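A minimal Python sketch of the formula, using hypothetical counts. Precision is undefined when the system makes no positive predictions (TP + FP = 0); returning 0.0 in that case is one common convention and is an assumption of this sketch, not part of the definition.

```python
def precision(tp, fp):
    """Fraction of positive predictions that are correct: TP / (TP + FP)."""
    if tp + fp == 0:
        # No positive predictions: precision is undefined.
        # Returning 0.0 here is an assumed convention.
        return 0.0
    return tp / (tp + fp)


# Hypothetical counts: 50 people flagged as sick, 40 of whom really are.
print(precision(tp=40, fp=10))  # 0.8
```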

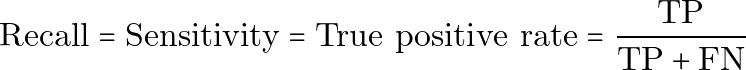

Recall, Sensitivity, and True Positive Rate

Recall, also called sensitivity or the true positive rate, measures the proportion of people who actually have the disease that the model correctly identifies.

In plain text:

Recall = Sensitivity = True positive rate = TP / (TP + FN)

High recall means the system misses fewer people who actually have the disease. Recall is especially important when false negatives are dangerous.
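A minimal Python sketch with hypothetical counts. The second call reuses the "everyone is healthy" model from the accuracy example: its 99% accuracy corresponds to a recall of zero. As with precision, returning 0.0 when there are no actual positives is an assumed convention.

```python
def recall(tp, fn):
    """Fraction of actual positives that are found: TP / (TP + FN)."""
    if tp + fn == 0:
        # No actual positives: recall is undefined; 0.0 is an assumed convention.
        return 0.0
    return tp / (tp + fn)


# Hypothetical counts: 60 people are sick and the model finds 40 of them.
print(round(recall(tp=40, fn=20), 4))  # 0.6667

# The "predict everyone healthy" model: 10 sick people, none found.
print(recall(tp=0, fn=10))  # 0.0
```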

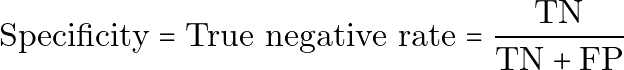

Specificity

Specificity, also called the true negative rate, measures the proportion of healthy people who are correctly identified as healthy.

In plain text:

Specificity = True negative rate = TN / (TN + FP)

High specificity means the system avoids incorrectly labeling healthy people as diseased.
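Specificity mirrors recall on the healthy side of the confusion matrix. A minimal Python sketch with hypothetical counts (940 healthy people, 930 correctly cleared, 10 falsely flagged); the 0.0 return for an empty healthy class is an assumed convention.

```python
def specificity(tn, fp):
    """Fraction of healthy people predicted healthy: TN / (TN + FP)."""
    if tn + fp == 0:
        # No actual negatives: specificity is undefined; 0.0 is an assumed convention.
        return 0.0
    return tn / (tn + fp)


# Hypothetical counts: 940 healthy people, 10 of them wrongly flagged as sick.
print(round(specificity(tn=930, fp=10), 4))  # 0.9894
```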

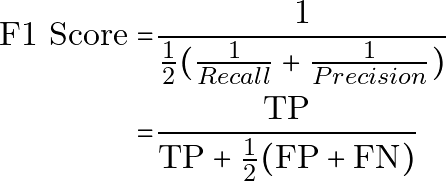

F1 Score

The F1 score combines precision and recall into one metric. It is the harmonic mean of precision and recall.

In plain text:

F1 Score = 1 / ((1 / 2) * ((1 / Recall) + (1 / Precision)))

F1 Score = TP / (TP + (1 / 2) * (FP + FN))

Equivalently:

F1 Score = 2 * Precision * Recall / (Precision + Recall)

The F1 score is useful when both false positives and false negatives matter, especially with imbalanced classes. It does not include true negatives directly, so it should be interpreted together with the confusion matrix and the actual cost of each kind of error.
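The count-based form and the precision/recall form give the same number. The sketch below computes both for hypothetical counts (TP = 40, FP = 10, FN = 20); the function names are illustrative, and returning 0.0 when the score is undefined is an assumed convention.

```python
def f1_from_counts(tp, fp, fn):
    """F1 = TP / (TP + (FP + FN) / 2). Note that TN is not an input."""
    denom = tp + 0.5 * (fp + fn)
    if denom == 0:
        return 0.0  # assumed convention when there are no positives at all
    return tp / denom


def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both are zero
    return 2 * precision * recall / (precision + recall)


tp, fp, fn = 40, 10, 20   # hypothetical counts
p = tp / (tp + fp)        # precision = 0.8
r = tp / (tp + fn)        # recall = 0.666...
print(round(f1_from_counts(tp, fp, fn), 4))      # 0.7273
print(round(f1_from_precision_recall(p, r), 4))  # 0.7273
```

Because `f1_from_counts` never takes TN, adding any number of additional correctly cleared healthy people leaves the score unchanged, which is why the F1 score should be read alongside the full confusion matrix.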